🚀 1 Motivation & Problem

Humans understand the physical world through structured mathematical abstractions. From Isaac Newton’s formulation of universal gravitation inspired by a falling apple, to modern physics, quantitative laws enable precise reasoning about the dynamics of the real world. In contrast, although state-of-the-art AI systems demonstrate remarkable capabilities in mathematical reasoning, programming, and scientific writing, enabling artificial intelligence to ground its understanding in the physical world remains a fundamental and unresolved challenge. This limitation poses a critical barrier to deploying AI systems in real-world, embodied environments.

Modern large language models (LLMs) are predominantly trained under the next-token prediction paradigm, which implicitly encourages models to capture statistical regularities in data. A natural extension toward building world models is to train systems to predict future states—such as future frames in videos or evolving spatial configurations. While such approaches can improve perceptual modeling and temporal prediction, they do not necessarily lead to a true understanding of physical laws. Instead, models may learn to imitate surface-level patterns in visual data without acquiring the underlying causal and quantitative structure of the physical world.

This limitation can be intuitively understood by analogy to human cognition. If humans were to perceive the world purely through passive observation, without forming explicit conceptual or physical knowledge, their behavior would be driven by superficial correlations rather than grounded reasoning. As a result, actions would lack an understanding of physical consequences (e.g., failing to infer the danger of falling from a height), reflecting a gap between perception and cognition. Similarly, current AI systems often rely on learned statistical priors rather than principled physical reasoning.

To mitigate this issue, prior work has introduced large-scale datasets in the form of Visual Question Answering (VQA) to inject world knowledge into models. However, such approaches remain insufficient for evaluating true physical understanding.

- Problem: Existing benchmarks for physical world understanding are predominantly VQA-based and qualitative. These evaluations often reduce reasoning to discrete answer selection or linguistic plausibility, which can be solved via pattern matching rather than genuine physical inference.

- Insight: To address this limitation, the authors introduce a new paradigm that evaluates quantitative physical reasoning, focusing on whether models can infer numerical kinematic properties (e.g., size, velocity, acceleration) from visual inputs.

💡 2 Methodology

2.1 Task Formulation

The paper formulates a kinematic inference task for evaluating physical reasoning in vision-language models. Given a video and a single physical prior (e.g., size $\bold{S}_t^{\text{world}}$, velocity $\bold{V}_t^{\text{world}}$, or acceleration $\bold{A}_t^{\text{world}}$), the model is required to estimate another physical quantity of an target object in real-world units.

| Component | Definition | Measurement Units |

|---|---|---|

| Pixel Space | Observable quantities derived from video frames | [pixel], [pixel/s], [pixel/s²] |

| World Space | Physical quantities in real-world coordinates | [m], [m/s], [m/s²] |

| Scale Factor (γ) | Mapping between pixel space and world space | [m/pixel] |

Given a video capturing the translational motion of a target object under a fixed camera, the object's position in pixel space, denoted as $\mathbf{X}_t^{\text{pixel}}$, can be obtained at each time step $t$ from the frames. Based on the resulting discrete trajectory, the velocity and acceleration in pixel space can be estimated using finite difference approximations:

$$ \bold{V}_t^{\text{pixel}}\approx\frac{\bold{X}_{t+\mathrm{d}t}^{\text{pixel}}-\bold{X}_t^{\text{pixel}}}{\mathrm{d}t}; \bold{A}_t^{\text{pixel}}\approx\frac{\bold{X}_{t+2\mathrm{d}t}^{\text{pixel}}-2\bold{X}_{t+\mathrm{d}t}^{\text{pixel}}+\bold{X}_t^{\text{pixel}}}{\mathrm{d}t^2}. \tag{1} $$To convert these pixel-based measurements into real-world physical quantities, a scale factor $\gamma$ is introduced, which maps pixel space to world space. The relationship can be expressed as follows:

$$ \bold{S}_t^{\text{world}}=\gamma \cdot \bold{S}_t^{\text{pixel}}; \bold{V}_t^{\text{world}}=\gamma \cdot \bold{V}_t^{\text{pixel}}; \bold{A}_t^{\text{world}}=\gamma \cdot \bold{A}_t^{\text{pixel}}. \tag{2} $$Thus, we can compute the kinematic properties from the video and these priors.

2.2 Benchmark Design

For comprehensively evaluate of the kinematic movements above, QuantiPhy include video-question pairs along three primary axes. The first two axes define the core reasoning task:

- Dimensionality: {2D, 3D}. 2D movement assumes motion strictly in the x-y plane (constant depth), while 3D movement includes the z-axis (varying depth), making it intrinsically more challenging.

- Physical prior: {Static, Dynamic}. The Static prior provides constant object size $\bold{S}^{\text{world}}$ throughout the video, while the Dynamic prior provides velocity $\bold{V}_t^{\text{world}}$ or acceleration $\bold{A}_t^{\text{world}}$ at a given timestep $t$.

These two axes yield four tasks: 2D-Static (2S), 2D-Dynamic (2D), 3D-Static (3S), and 3D-Dynamic (3D). The data statistic of QuantiPhy benchmark is presented in Table 2.

| Category | Value | Description |

|---|---|---|

| Total Videos | 569 | Unique video samples collected from multiple sources |

| Total QA Pairs | 3,355 | Video-question pairs with numerical ground truth |

| Task Types | 4 | 2D-Static, 2D-Dynamic, 3D-Static, 3D-Dynamic |

| Video Duration | 2–3 seconds | Typical length of each video clip |

| Data Sources | 3 | Blender simulation, lab capture, internet videos |

| Storage Size | ~115 MB | Total dataset size after processing |

2.3 Data Construction

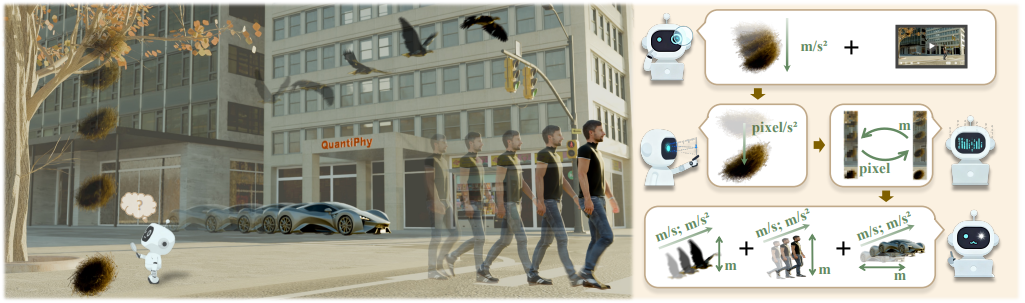

QuantiPhy employs a three-stage construction pipeline that balances experimental control with real-world diversity. As illustrated in Figure 2.1, the authors integrate synthetic simulation, controlled laboratory capture, and in-the-wild internet videos to create a comprehensive evaluation benchmark.

Stage 1: Data Collection. The authors source videos from three complementary channels to ensure broad coverage of physical scenarios:

| Source | Quantity | Key Characteristics | Primary Use Case |

|---|---|---|---|

| Blender Simulation | 300 videos | Full physical control; precise ground-truth; scalable scene variation | Controlled experiments; counterfactual testing |

| Lab Capture | 112 videos | Real-world physics; 4D metric reconstruction; calibrated multi-view | Real sensor validation; depth-varying 3D motion |

| Internet Scraping | 72 videos | Natural scenes; diverse distributions; uncontrolled conditions | Out-of-distribution evaluation |

| Segmented (SAM2) | 85 videos | Isolated objects on plain backgrounds; background ablation | Scene complexity analysis |

Blender Simulation enables precise control over object kinematics, camera parameters, and scene composition. They render scenes using Cycles/EEVEE engines with varying resolutions (1920×1080, 1080×1080, 480×960), frame rates (24–120 fps), and lighting conditions. Motion types include: (i) keyframed animation for articulated objects (humans, animals), and (ii) physics-driven simulation for rigid-body dynamics with Newtonian constraints.

Lab Capture utilizes four Orbbec Femto Mega RGB-D cameras arranged in multi-view stereo configuration. They capture diverse motions including free fall, sliding, pendulum oscillation, and bouncing across small-scale (desk-top) and large-scale (room-scale) setups.

Internet Videos are manually curated from open-source platforms and author-recorded footage, strictly filtered for static camera, translational motion, and visible reference objects. All identifiable information (faces, license plates) is anonymized via blurring.

Stage 2: Data Annotation. They employ source-specific annotation protocols to extract precise kinematic ground truth:

| Source | Annotation Method | Extracted Quantities | Precision |

|---|---|---|---|

| Blender | Automated Python scripts querying scene graph | Size, displacement, velocity, acceleration, depth | Exact (floating-point) |

| Lab | UI-assisted depth clicking + multi-view triangulation | Metric depth, 3D trajectory, instantaneous velocity/acceleration | ±1 cm (depth camera limited) |

| Internet | Interactive pixel measurement tool + reference scaling | Pixel kinematics → world units via γ estimation | Approximate (reference-dependent) |

Stage 3: Task Formulation. Each video is associated with multiple (prior, question, ground-truth) triplets following the kinematic inference framework:

| Field | Description | Example |

|---|---|---|

| video_id | Unique identifier | simulation_0032 |

| video_type | 4-character code: [Prior][Dim][Objects][Background] | A3MC (Acceleration, 3D, Multiple objects, Complex) |

| inference_type | Prior dynamics → Target dynamics (S=static, D=dynamic) | DD (Dynamic prior → Dynamic target) |

| ground_truth_prior | Provided physical constant with unit | gravity acc = 9.8 m/s² |

| depth_info | Temporal depth annotations (3D tasks only) | t=1s, distance_ball_camera = 1.4020m |

| ground_truth_posterior | Numerical answer (unit specified in question) | 2.86 |

The four-character video type code systematically encodes task complexity:

- 1st character: S (Size prior), V (Velocity prior), or A (Acceleration prior)

- 2nd character: 2 (2D planar motion) or 3 (3D depth-varying motion)

- 3rd character: S (Single object) or M (Multiple objects requiring relational reasoning)

- 4th character: X (Plain background), S (Simple texture), or C (Complex scene) This schema yields 36 fine-grained categories (e.g., A2SX, V3MC), each populated with ≥4 videos to ensure statistical validity. The final dataset comprises 569 unique videos and 3,355 question-answer pairs, with 2D:3D ratio of approximately 4:3 and balanced distribution across inference types.

🛠️ 3 Evaluation Protocol

The QuantiPhy evaluation framework is designed to rigorously assess Vision-Language Models' quantitative physical reasoning through standardized prompting, robust parsing, and calibrated metrics. Their protocol addresses three critical challenges: (i) ensuring consistent model behavior across diverse architectures, (ii) extracting reliable numerical predictions from potentially verbose outputs, and (iii) measuring proximity to ground truth with appropriate tolerance for physical measurement uncertainty.

3.1 Benchmark Models

The authors evaluate 21 state-of-the-art VLMs spanning proprietary APIs and open-weight architectures to ensure comprehensive coverage of current capabilities:

| Category | Models | Key Characteristics |

|---|---|---|

| Proprietary | ChatGPT-5.1, ChatGPT-5 | OpenAI multimodal with extended CoT reasoning |

| Gemini-2.5 Pro/Flash | Google long-context video understanding | |

| Grok-4.1 (Fast Reasoning) | xAI rapid inference with reasoning optimization | |

| Claude-4.5 Sonnet | Anthropic detailed explanatory generation | |

| Open-Weight (Scaling Series) | Qwen3-VL-Instruct (2B/8B/32B) | Alibaba architecture scaling analysis |

| InternVL-3.5 (2B/8B/30B) | Shanghai AI Lab vision-language alignment | |

| Phi-4-Multimodal / Phi-3-Mini | Microsoft efficient multimodal design | |

| SmolVLM-Instruct (256M) | Ultra-lightweight edge deployment | |

| Specialized | Molmo-7B, VILA-7B, LLaVA-13B | Academic research architectures |

| MiniCPM-V 4.5, CogVLM2-Video | Native video input processing |

Deployment Configuration: Proprietary models are accessed via official APIs (OpenAI, Google, Anthropic, xAI). Open-weight models are hosted via Replicate API or self-deployed with vLLM. Temperature is fixed at 0–0.1 for deterministic outputs; token limits range from 500 (lightweight models) to 10,000 (reasoning-intensive models).

3.2 Prompting Strategy

They employ a constrained generation protocol designed to minimize output variance and enforce numerical precision:

| Component | Content | Purpose |

|---|---|---|

| [Video Frames] | Full temporal sequence at 480p resolution; all frames retained | Preserve motion dynamics; avoid temporal aliasing |

| [System Prompt] | "You are an expert video analyst specializing in physics measurements" | Establish authoritative persona; pilot-validated for adherence |

| [Ground Truth Prior] | Single physical constant (e.g., "length of yellow car = 5.67m") | Enable scale factor γ determination |

| [Depth Info] (3D only) | Temporal camera-object distances | Support depth-varying kinematic inference |

| [Question] | Target quantity with explicit unit and timestamp | Remove ambiguity in prediction target |

| [Post-Prompt] | "Output ONLY the numerical answer and unit. No explanation." | Suppress verbose CoT; enforce parseability |

Critical Design Choices:

- Temporal fidelity over spatial resolution: 480p preserves all frames; subsampling degrades velocity/acceleration tracking

- Single prior constraint: Exactly one physical constant provided to test scale transformation, not multi-factor estimation

- Deterministic decoding: Greedy sampling (temperature=0) where supported; default parameters otherwise.

3.3 Answer Retrieval and Parsing

Given model outputs ranging from concise numerical responses to extensive analytical narratives, they implement a hierarchical parsing pipeline:

| |

3.4 Evaluation Metric: Mean Relative Accuracy (MRA)

The authors adopt MRA as the primary metric, extending the design from VSI-Bench with threshold calibration for physical reasoning tasks:

$$ \text{MRA}=\frac{1}{10}\sum_{\theta\in\mathcal{C}}\mathbb{1}\bigg(\frac{|\hat{y}-y|}{|y|}\lt 1-\theta\bigg),\quad \mathcal{C}=\{0.5,0.55,...,0.95\} \tag{3} $$| Property | Description | Physical Reasoning Justification |

|---|---|---|

| Multi-threshold | 10 confidence levels (0.5–0.95) | Captures gradations of "accurate enough"; avoids binary rigidity |

| Relative error | $|\hat{y}-y|/|y|$ rather than absolute | Scale-invariant; comparable across microscopic to astronomical scenes |

| Partial credit | Linear accumulation across thresholds | 3.1m error (3% relative) rewarded; 31m error (1000% relative) penalized |

| Robustness | Indicator function rather than continuous loss | Tolerates annotation ambiguity (hair inclusion in height, rim vs. outer diameter) |

Aggregation Protocol:

- Question-level: MRA computed per (video, question) pair.

- Category-level: Average MRA across all questions in {2D-Static, 2D-Dynamic, 3D-Static, 3D-Dynamic}.

- Model-level: Unweighted mean of four category scores.

Questions with no valid numerical output after 5 retries contribute MRA = 0 to the category average.

📊 4 Experiments

4.1 Main Results

Figure 4.1 presents performance across four kinematic inference categories. ChatGPT-5.1 achieves the highest overall MRA (53.1%), marginally surpassing humans on 2D-Dynamic tasks but remaining below the human average of 55.6%. Open-weight models exhibit clear scaling effects: Qwen3-VL improves from 29.0% (2B) to 46.0% (32B), with gains most pronounced on dynamic categories requiring temporal integration.

4.2 Effect of Scene Context

The authors analyze performance across scene difficulty axes:

- Background Complexity: Performance in complex backgrounds (C, 0.40 MRA) slightly exceeds simple textures (S, 0.38) and plain backgrounds (X, 0.35). Realistic backgrounds provide additional scale reference cues (road markings, architectural elements) that aid inference.

- Object Multiplicity: Multiple-object scenes (M) consistently outperform single-object scenes (S) by 3–5 MRA points. Additional objects serve as implicit comparison standards for size and speed estimation.

🧠 5 Reflection & Inspiration

The study reveals that current vision-language models struggle with quantitative physical reasoning. Instead of relying on visual evidence and provided priors, they tend to depend heavily on pre-trained world knowledge, leading to limited numerical accuracy and poor input faithfulness.

- Pros:

- Novel quantitative paradigm: Moves beyond binary VQA evaluation to continuous numerical accuracy with MRA metric, distinguishing 3.1m error (acceptable) from 31m error (catastrophic).

- Controlled yet diverse data: Blender simulation enables exact ground-truth and systematic variation; lab capture adds real-world validation; internet data tests distribution generalization.

- Cons:

- Simplified physical scope: Restricted to translational motion of rigid objects—no rotation, deformation, fluid dynamics, or multi-body contact physics relevant to real robotics.

- Fixed camera assumption: Eliminates ego-motion ambiguity present in embodied navigation and AR/VR applications (not general scenarios).

- No possible solution: Benchmark identifies failure modes but provides no demonstration of improved training recipes or fine-tuning strategies to address the

- Inspiration:

- From perception to cognition: Personal testing on VSI-Bench confirms SOTA models maintain strong performance (e.g., Object Counting) without visual input—mirroring QuantiPhy's findings. Rather than forcing pure perception, we should architect cognitive systems that strategically arbitrate between sensing and memory, transforming VLMs from passive perceivers into active agents that know when to look and when to recall.

- Agentic system as solution pathway: Even with substantial room for base model improvement, the immediate deployment of embodied AI may benefit more from intelligent system design—explicit uncertainty quantification, selective memory retrieval, and input-confidence gating—than from waiting for perfect end-to-end physical reasoning to emerge.